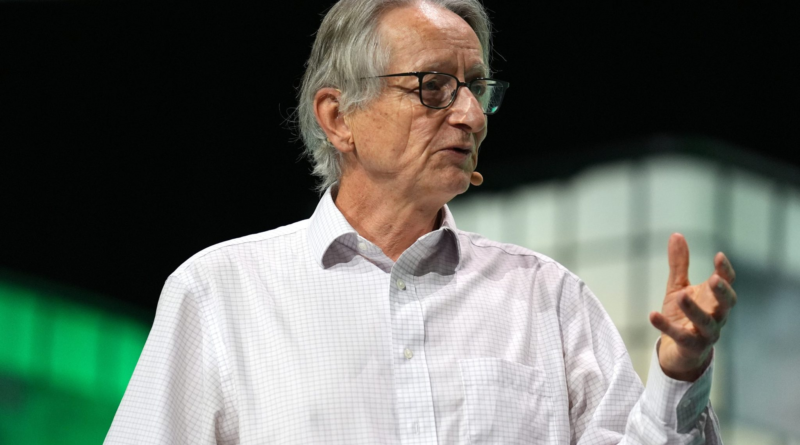

Nobel laureate Geoffrey Hinton is both AI pioneer and front man of alarm

Geoffrey Hinton is a walking paradox—an archetype of a certain kind of brilliant scientist.

Hinton’s renown was solidified on Tuesday when he won the Nobel Prize in physics, alongside the American scientist John Hopfield, for foundational work on artificial neural networks that led to modern-day breakthroughs in AI. However, in recent years he has come to be defined by a contradiction: the discovery that led to his acclaim is now a source of ceaseless concern.

Over the past year, Hinton, dubbed “the godfather of AI,” has repeatedly and emphatically warned about the dangers of the technology his discovery unleashed. In his role as both Prometheus and Cassandra, Hinton, like many scientists of legend, is caught between the human desire to achieve and the humanist impulse to reflect on the consequences of one’s actions. J. Robert Oppenheimer and Albert Einstein grappled torturously with the destruction their atomic research caused. Alfred Nobel, the inventor of dynamite, became so distraught over what his legacy might be that he left his fortune to establish the eponymous prize that Hinton won.

“I can’t see a path that guarantees safety,” Hinton told 60 Minutes in 2023. “We’re entering a period of great uncertainty, where we’re dealing with things we’ve never dealt with before.”

Much of Hinton’s worry stems from the belief that humanity knows frighteningly little about artificial intelligence—and that machines may outsmart humans. “These things could get more intelligent than us and could decide to take over, and we need to worry now about how we prevent that happening,” he said in an interview with NPR.

Originally from England, Hinton spent much of his professional life in the U.S. and Canada. It was at the University of Toronto that he reached a major breakthrough that would become the intellectual foundation for many contemporary uses of AI. In 2012, Hinton and two grad students (one of whom was Ilya Sutskever, the former chief scientist at OpenAI) built a neural network that could identify basic objects in pictures. Google eventually bought a company Hinton had started based on the tech for $44 million. Hinton then worked at Google for 10 years before retiring in 2023 to free himself from any corporate constraints that might have limited his ability to warn the public about AI. (Hinton did not respond to a request for comment.)

Hinton fears the rate of progress in AI as much as anything else. “Look at how it was five years ago and how it is now,” Hinton told the New York Times last year. “Take the difference and propagate it forwards. That’s scary.”

Hinton is also concerned that AI models can pass along to one another anything a single model has learned, and that they can do so far more efficiently than humans can.

“Whenever one [model] learns anything, all the others know it,” Hinton said in 2023. “People can’t do that. If I learn a whole lot of stuff about quantum mechanics, and I want you to know all that stuff about quantum mechanics, it’s a long, painful process of getting you to understand it.”

Among Hinton’s more controversial views is that AI can, in fact, “understand” the things it is doing and saying. If true, this could shatter much of the conventional wisdom about AI. The consensus is that AI systems don’t necessarily know why they’re doing what they’re doing, but rather are programmed to produce certain outputs based on prompts they are given.

Hinton is careful to say in public statements that AI is not self-aware, as humans are. Rather, he argues, the mechanisms by which AI systems learn, improve, and ultimately produce certain outputs mean they must comprehend what they’re learning. What prompted Hinton to sound the alarm, according to Wired, was a chatbot accurately explaining why a joke he had made up was funny. That a chatbot could understand the subtleties of humor and then convey them clearly in its own words was revelatory in Hinton’s view.

As humanity races toward a finish line that virtually no one understands, Hinton fears that control of AI may slip through humanity’s fingers. He envisions a scenario in which AI systems will write code to alter their own learning protocols and hide from humans. In a Shakespearean twist, they’ll have learned how to do so precisely from our own flaws.

“They will be able to manipulate people,” Hinton told 60 Minutes in October 2023. “They will be very good at convincing people, because they’ll have learned from all the novels that were ever written, all the books by Machiavelli, all the political connivances, they’ll know all that stuff.”
